March 1, 2023

When it comes to application development, I think we could all agree that the push for automation is stronger than ever. We’re seeing greater demand on the business side for features to be released faster and with higher quality, and that’s prompting more calls on the technical side to automate, automate, automate. And although more automation sounds great in theory, it’s important to understand how much of it is actually practical for your business.

Automation can instill confidence to release software and improve the team’s ability to create high-quality applications in the fastest and most efficient way possible. Essentially, it eliminates the need to compromise or choose one set of priorities over another. Instead, it allows teams to strike a balance between confidence/coverage and speed/efficiency.

But automation isn’t a one-size-fits-all solution. Knowing what and how much to automate depends on a number of different factors. That said, the following best practices can help provide some guidance and inform your decisions when considering where and when to incorporate test automation.

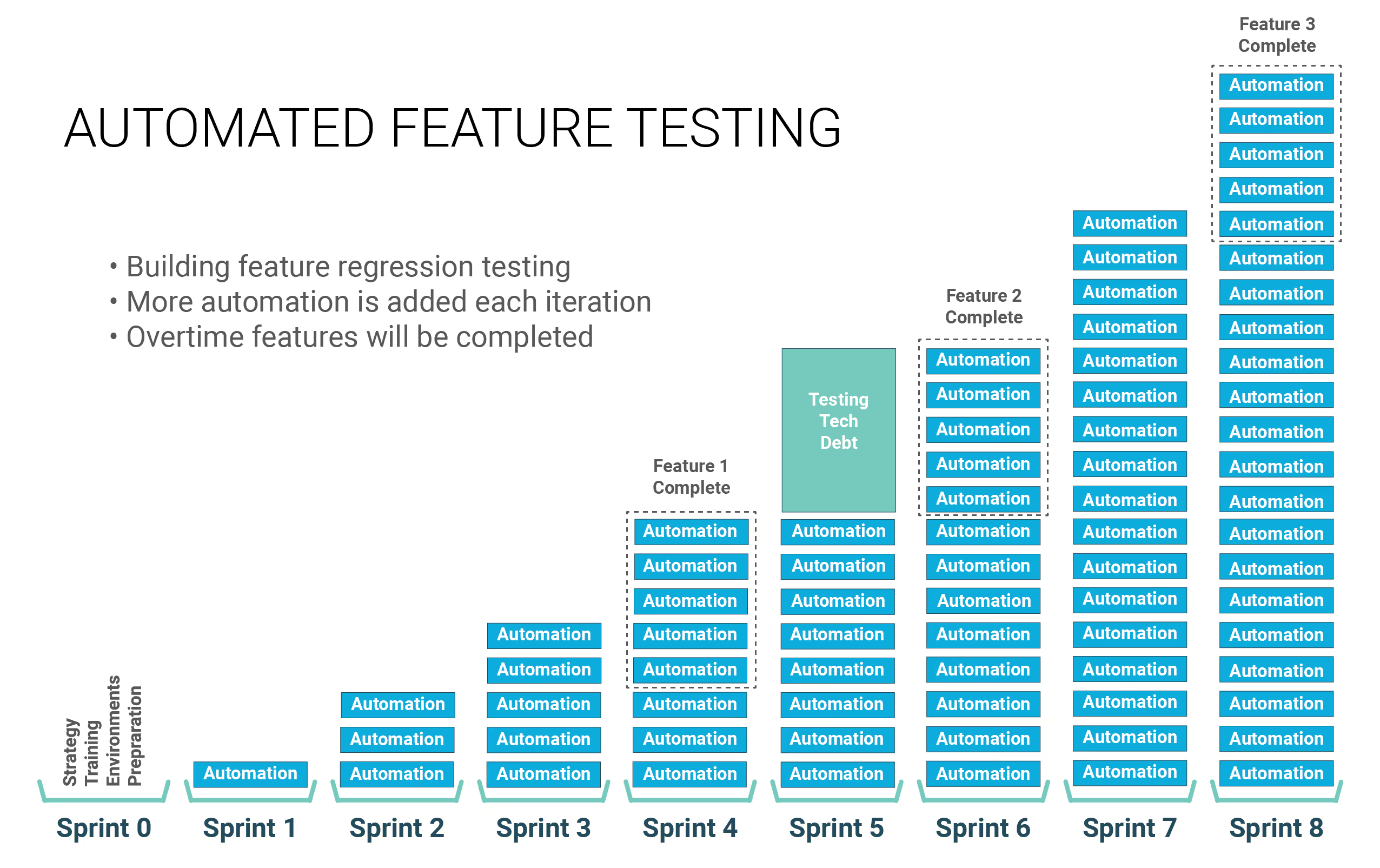

Treat automation like any agile application project. Break down the work into individual test cases, prioritize, and work down the list incrementally. It’s not meant to be a one-and-done fix that takes you from zero automation one day to all the automation you need the next. It's really an ongoing journey that you keep working on and refining. Start small and focus on racking up little wins. Get those in place and start building that confidence, so that tests can be executed early and often.

But let me be clear: this doesn’t mean start small and then come to a screeching halt. In fact, we often see teams fail for this precise reason. They put one toe in the water but never go further in creating test automation. As a result, they never automate enough to see any real benefit. From my experience, you need to make the investment and automate enough tests to noticeably lighten your manual regression testing, and then keep working on the highest-priority items to maintain that benefit.

When you’re starting a new project, the goal is in-sprint automation, meaning test automation is completed within the same sprint in which the feature is developed. That’s the goal, but it’s not always feasible, so don’t make it a hard requirement that causes stories to fail. Also, on a new project, the UI is typically either not built yet or changing a lot, so don’t try to automate too much at the beginning. Ultimately, you want to get the team jelling and the velocity up, creating early wins at a sustainable pace.

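Our recommendation is to start with sprint plus one or sprint plus X, meaning a feature’s tests are automated one sprint (or X sprints) after the feature itself is developed.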

We recommend different approaches based on whether you’re building a new app or adding automation to an existing app. Here’s a quick overview of each scenario:

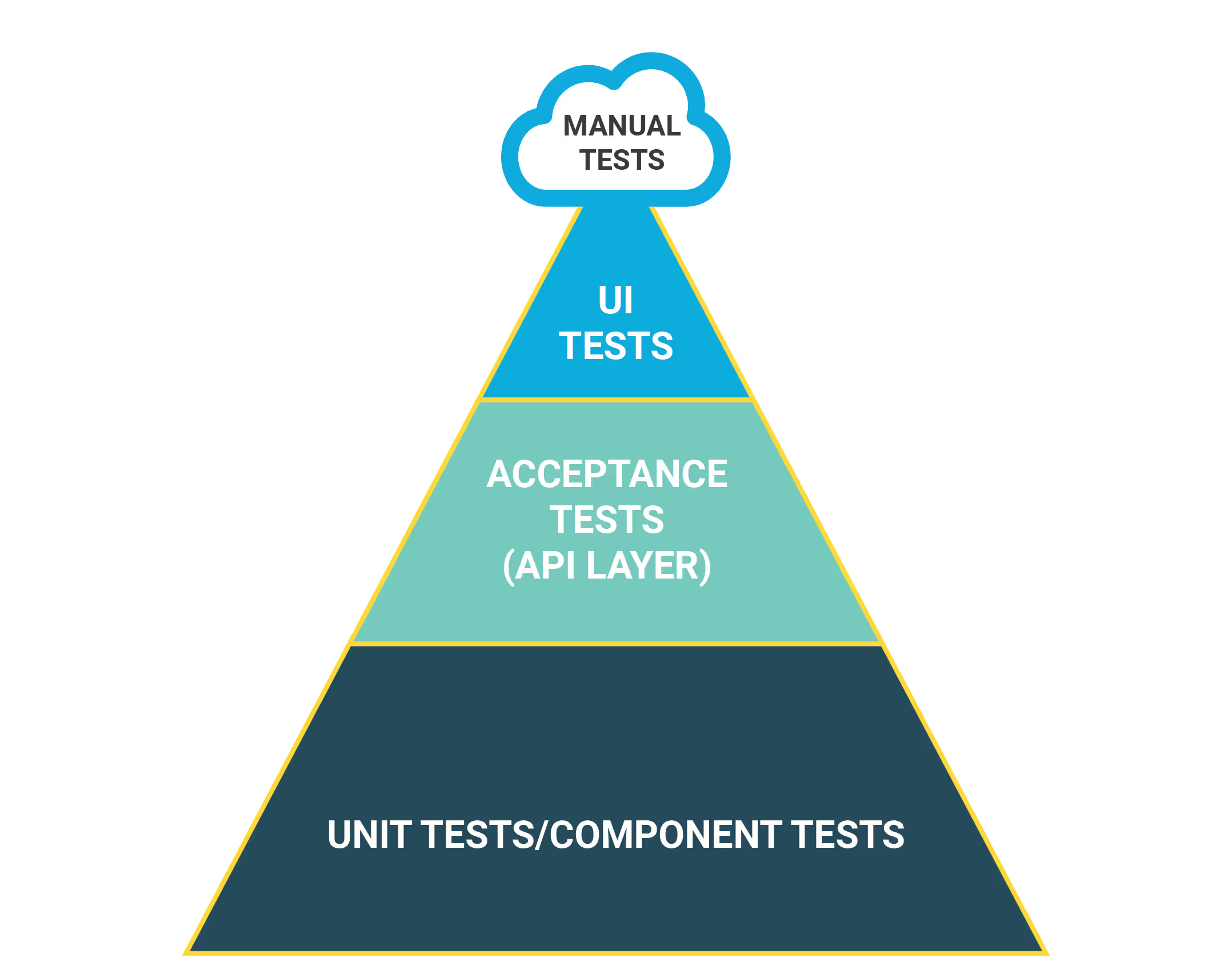

It’s best to take a bottom-up approach with a greenfield project. This means you start building tests from a feature standpoint. Then, over time, weave these feature tests together to form end-to-end tests. Early on, APIs are changing quite a bit, especially in the first few sprints. As a result, you want to stay nimble from an automation standpoint. Create some lightweight test automation that can easily change as the application evolves.

Also, keep in mind that in the first few sprints, the user interface may not be built out yet. As a result, don’t put as much emphasis on end-user or UI tests just yet. Keep the focus on the API and unit level tests first and then scale up as the UI becomes more stable.

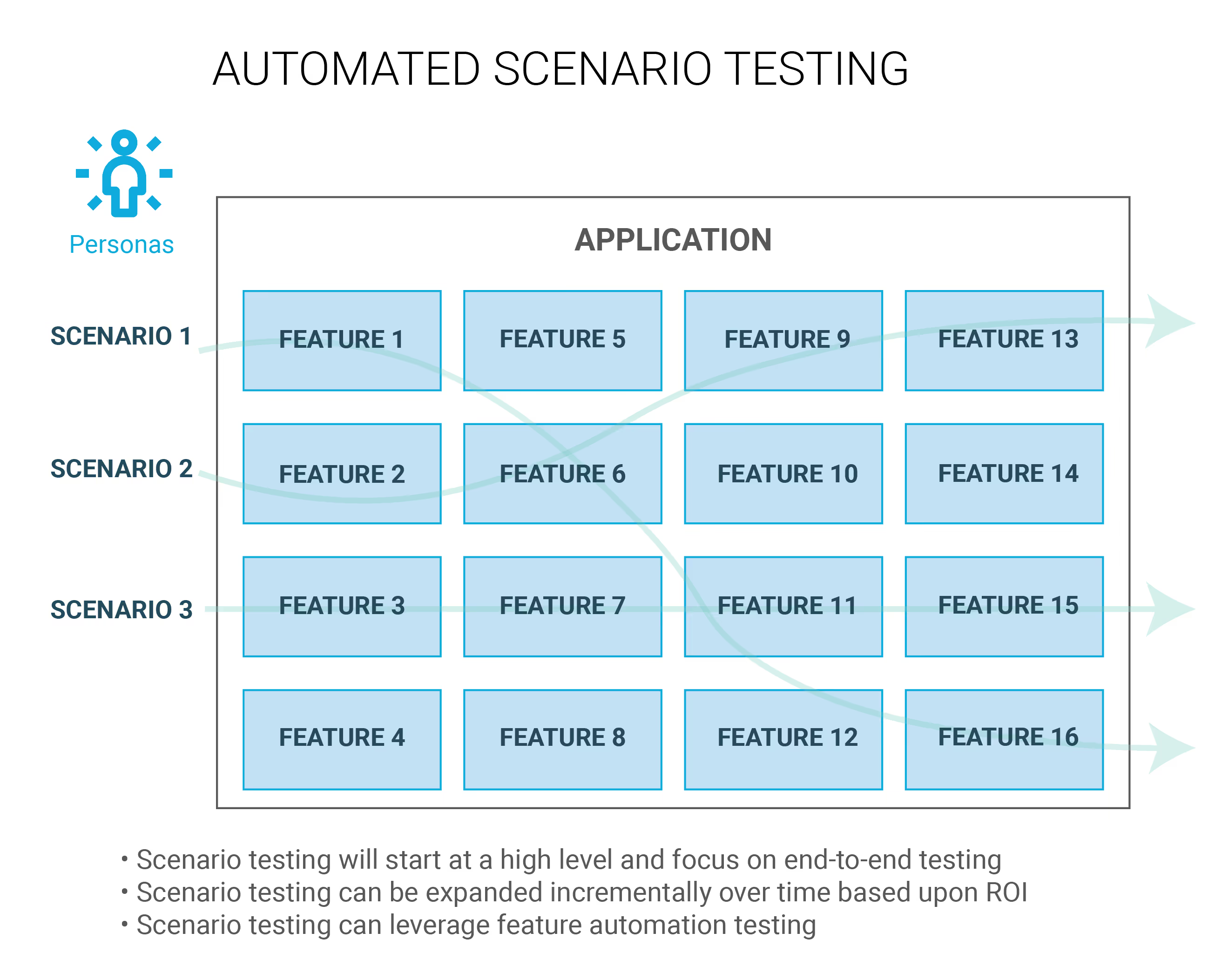

For an existing application, you want to focus on building out end-to-end tests, those happy paths, all the way through the application. The goal is to touch as many features as you can with the minimum number of tests, giving you broad coverage and the confidence to deploy the solution with quality.

This approach is ideal for software already in development, where you need to fill in the gaps by expanding test coverage. For an application that’s ready to deploy, focus on building the automation onto completed features.
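One way to think about "as many features as you can, with the minimum number of tests" is as a coverage-selection problem. The sketch below is only an illustration: it assumes you can list which features each candidate happy-path test touches, and it uses a simple greedy heuristic that yields a small suite rather than a provably minimal one. The test and feature names are hypothetical.

```python
from typing import Dict, List, Set


def pick_happy_paths(coverage: Dict[str, Set[str]]) -> List[str]:
    """Greedily pick end-to-end tests until every known feature is touched.

    `coverage` maps a test name to the set of features it exercises.
    Greedy selection is a heuristic: it gives a small suite, not a
    guaranteed-minimal one.
    """
    uncovered: Set[str] = set().union(*coverage.values())
    chosen: List[str] = []
    while uncovered:
        # Pick the test that touches the most still-uncovered features.
        best = max(coverage, key=lambda name: len(coverage[name] & uncovered))
        gained = coverage[best] & uncovered
        if not gained:
            break  # Defensive: no remaining test adds coverage.
        chosen.append(best)
        uncovered -= gained
    return chosen


# Hypothetical feature map for an existing e-commerce app.
candidate_tests = {
    "guest_checkout": {"search", "cart", "payment"},
    "member_checkout": {"login", "cart", "payment", "order_history"},
    "browse_only": {"search"},
    "profile_update": {"login", "profile"},
}

suite = pick_happy_paths(candidate_tests)
print(suite)
```

Here three of the four candidate tests are enough to touch all six features, so `browse_only` can wait until the higher-value paths are automated.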

Automation isn’t a silver bullet. Projects can still struggle without the right plan in place to ensure the quality of the application throughout its lifecycle. The takeaway is that good design is important and needs to be established early in the process, typically in sprint zero.

What comprises good design? Key elements include:

As I stated earlier, the bottom-line goal of test automation is instilling confidence. But don’t take it for granted. When tests fail, people lose confidence in the automated suite. That’s why I believe a failing test should always be everyone’s top priority. Drop what you’re doing, figure out what's wrong, and get it fixed. Either there was a requirement change, the developer broke something, or we have a data problem. Identify the issue quickly, fix it, and move on to the next so you can keep the confidence level high in your automated suite.

Don’t jump ahead too early when it comes to test automation. Follow the testing pyramid to help produce higher quality faster. This means minimizing testing at the UI level early on. UI tests are the slowest, most brittle, and flakiest tests. Unit tests, on the other hand, are the fastest and don’t require deployment of the application, so they can run as part of your CI build process. Unit tests exercise individual components to confirm the new feature works as expected. Once you have high confidence at this level, you can move on to more API and UI tests.
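To make the pyramid ordering concrete, here is a minimal sketch of a staged runner that executes the fast unit layer first and only moves up to the API and UI layers when the layer below passes. It is an illustration under stated assumptions, not a real test framework: `run_pyramid`, `price_with_tax`, and the placeholder checks are all hypothetical stand-ins for your actual suites.

```python
from typing import Callable, List, Tuple

Check = Callable[[], bool]


def run_pyramid(stages: List[Tuple[str, List[Check]]]) -> List[str]:
    """Run stages in order and stop after the first stage with a failure.

    Returns the names of the stages that actually ran.
    """
    ran: List[str] = []
    for name, checks in stages:
        ran.append(name)
        # Run every check in the stage so all failures at this level surface.
        results = [check() for check in checks]
        if not all(results):
            break  # Skip the slower, more brittle layers above.
    return ran


# Hypothetical unit under test: no deployment needed to check it.
def price_with_tax(price: float, rate: float) -> float:
    return round(price * (1 + rate), 2)


stages = [
    ("unit", [lambda: price_with_tax(100.0, 0.08) == 108.0]),
    ("api", [lambda: True]),  # placeholder for API-level tests
    ("ui", [lambda: True]),   # placeholder for UI-level tests
]

print(run_pyramid(stages))
```

If a unit check fails, the runner never spends time on the API or UI layers, which is the point of building confidence from the bottom of the pyramid up.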

Work collaboratively with the business side to ensure the right things are prioritized. And, be sure to clearly communicate to the business side what is covered by automation and what is not. That way, everyone is on the same page in terms of what features are being requested, how they’ll be used, and what data is available to support or inform your decisions.

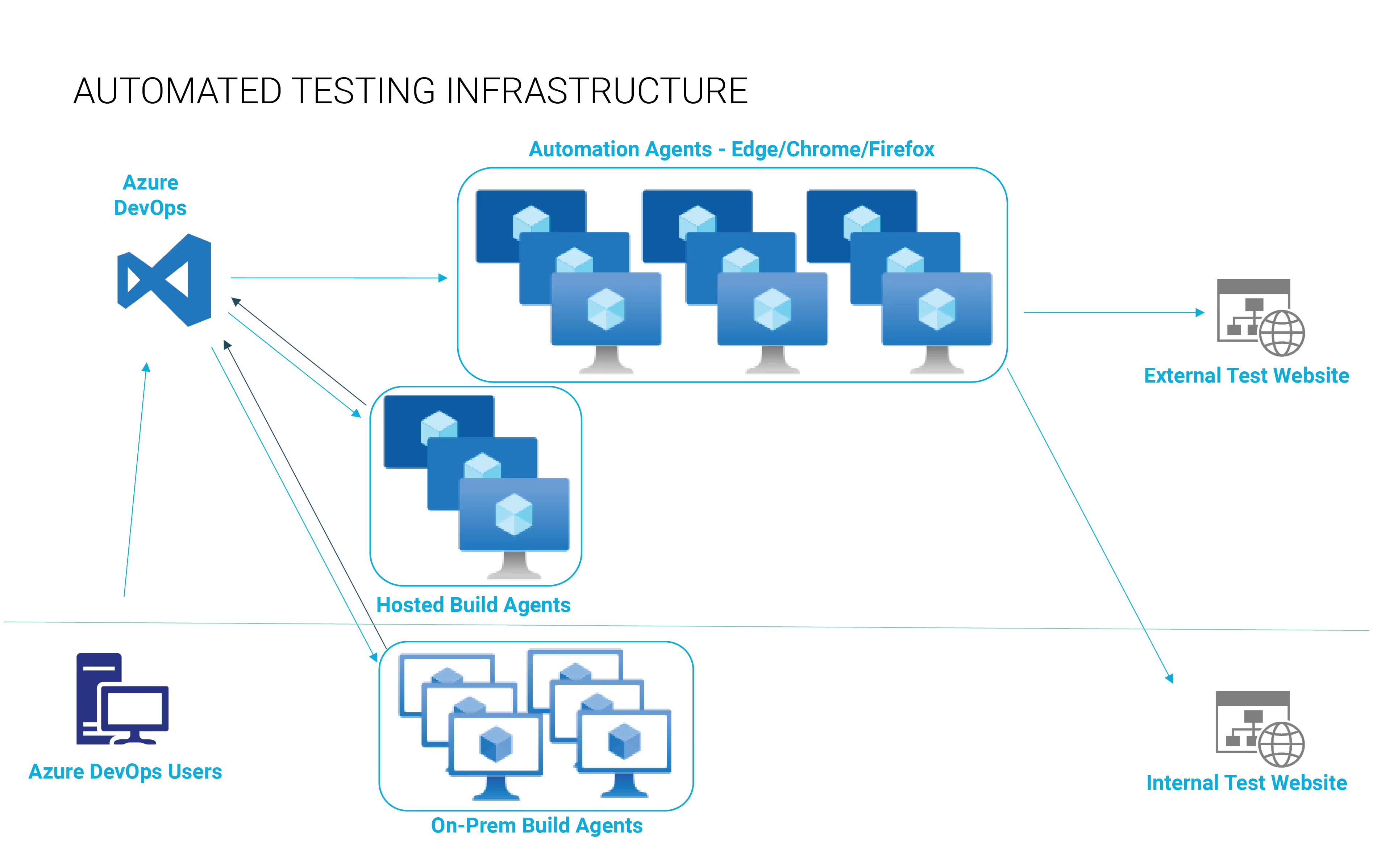

For cloud-native apps, the goal is to put as many tests in the application pipeline as possible to provide a robust quality gate. When building in automation, be sure to consider how long the tests will take. It’s not unusual, for example, for UI regression runs to take as long as three to four hours. If you put that into your application pipeline, developers will end up waiting the entire time. As a general rule, the automation in your application pipeline shouldn’t take more than five to ten minutes, and a code change should be able to go from commit to production within 15 to 20 minutes at most. The goal is to fail fast and get that feedback quickly.
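A rough sketch of how that time budget might be applied: given an estimated duration and a priority for each suite, fill the pipeline gate in priority order and leave whatever doesn’t fit for a separate run outside the pipeline. The suite names, durations, priority scale, and the idea of a separate run are all assumptions for illustration; the ten-minute default comes from the rule of thumb above.

```python
from typing import List, NamedTuple, Tuple


class Suite(NamedTuple):
    name: str
    minutes: float
    priority: int  # lower number = more important (hypothetical scale)


def split_for_pipeline(
    suites: List[Suite], budget_minutes: float = 10.0
) -> Tuple[List[str], List[str]]:
    """Fill the pipeline gate in priority order without exceeding the budget."""
    in_pipeline: List[str] = []
    deferred: List[str] = []
    used = 0.0
    for suite in sorted(suites, key=lambda s: s.priority):
        if used + suite.minutes <= budget_minutes:
            in_pipeline.append(suite.name)
            used += suite.minutes
        else:
            deferred.append(suite.name)  # too slow for the gate
    return in_pipeline, deferred


suites = [
    Suite("unit", 2.0, 1),
    Suite("api_smoke", 4.0, 2),
    Suite("ui_regression", 210.0, 3),  # about three and a half hours
    Suite("ui_smoke", 3.0, 4),
]

gate, later = split_for_pipeline(suites)
print("pipeline gate:", gate)
print("run separately:", later)
```

With these made-up numbers the gate takes nine minutes, and the full UI regression run stays out of the developers’ critical path.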

Cloud can be a big help on this front. When you spin up multiple agents—say 12 to 20 virtual machines at a time—they'll run through the tests quickly and allow you to scale out wide. You can then shut those machines down when they’re no longer needed. This helps keep costs low. Plus, you gain the added benefit of going wide and running those tests in the most efficient way possible.
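As a back-of-the-envelope illustration of going wide, the sketch below spreads tests across agents by always handing the next-longest test to the least-loaded agent, then reports the resulting wall-clock time. It assumes tests are independent and ignores machine startup time; the function names and the 240 one-minute tests are hypothetical.

```python
import heapq
from typing import Dict, List


def shard_tests(durations: Dict[str, float], agents: int) -> List[List[str]]:
    """Assign each test to the currently least-loaded agent, longest first."""
    if agents < 1:
        raise ValueError("agents must be at least 1")
    # Heap entries: (total minutes assigned so far, agent index).
    heap = [(0.0, i) for i in range(agents)]
    shards: List[List[str]] = [[] for _ in range(agents)]
    for name, minutes in sorted(durations.items(), key=lambda kv: -kv[1]):
        load, idx = heapq.heappop(heap)
        shards[idx].append(name)
        heapq.heappush(heap, (load + minutes, idx))
    return shards


def wall_clock(durations: Dict[str, float], shards: List[List[str]]) -> float:
    """The run finishes when the most heavily loaded agent finishes."""
    return max((sum(durations[n] for n in shard) for shard in shards), default=0.0)


# Hypothetical suite: 240 one-minute UI tests, i.e. four hours run serially.
durations = {f"ui_test_{i}": 1.0 for i in range(240)}
shards = shard_tests(durations, agents=12)
print(wall_clock(durations, shards))  # 20.0 minutes across 12 agents
```

In this idealized case a four-hour serial run drops to about 20 minutes on 12 agents, and the same calculation lets you estimate how many agents you need before you spin them up.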

Although there’s no one-size-fits-all solution for test automation, having a solid plan and leveraging these lessons learned can help improve your automation success and achieve the ideal balance between confidence and efficiency.