.jpeg)

AI software development isn't new, but it has been the talk of the industry recently. For the last few years, coding assistants have helped improve the speed and quality of software development. Last year, newer agentic coding tools shifted the model from co-authoring between human and AI to humans managing AI agents that do the development. These tools showed exciting promise but often fell short of large productivity gains. At the time, they also increased review time and required rework because the generated code often didn't quite work.

In November 2025, new frontier models were all released within 10 days of each other: Google Gemini 3 Pro, Anthropic Opus 4.5, and OpenAI GPT-5.1. It didn't seem like a pivotal moment at the time, since these vendors had been releasing new models every few months. But these releases changed everything: larger context windows, better context handling, and improved reasoning combined to produce a leap forward in coding ability.

There are many ways to use AI to build software. Vibe coding, a term coined by AI researcher Andrej Karpathy [1], became a popular way to have AI write code because it allowed anyone, with or without experience, to build software from a few simple natural language prompts. This works well for micro apps and prototypes but falls short when building larger enterprise systems. The prompts lack the upfront planning and guardrails needed to keep bugs, security issues, and performance problems out of the software.

The shift from vibe coding to agentic engineering is well underway. Karpathy himself moved away from the term "vibe coding" and now advocates for "agentic engineering", emphasizing the art, science, and expertise involved. Organizations like Spotify report that their best developers haven't written a line of code since December [2], and the productivity gains are real. But speed doesn't mean quality. AI-developed code is being blamed for quality issues at public cloud providers [3] and other organizations. According to the Cortex 2026 Benchmark Report, pull requests (PRs) per author have increased 20%, but incidents per pull request have increased 23.5% [4]. Too many people have been quick to throw out traditional software development practices because they might slow development down, but it's important to measure the time it takes until everything is done right, not just how fast code can be written.
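The two Cortex figures compound. As a rough sketch, assuming both percentages are measured against the same baseline period and that incidents per author is simply PRs per author multiplied by incidents per PR (an assumption made here for illustration, not a figure from the report), incidents per author rise by roughly 48%:

```python
# Figures quoted in the text from the Cortex 2026 Benchmark Report [4].
PRS_PER_AUTHOR_GROWTH = 0.20      # PRs per author: +20%
INCIDENTS_PER_PR_GROWTH = 0.235   # incidents per PR: +23.5%


def incidents_per_author_growth(prs_growth: float, incidents_per_pr_growth: float) -> float:
    """Relative change in incidents per author.

    Assumes incidents_per_author = prs_per_author * incidents_per_pr,
    so the two growth factors multiply.
    """
    return (1 + prs_growth) * (1 + incidents_per_pr_growth) - 1


growth = incidents_per_author_growth(PRS_PER_AUTHOR_GROWTH, INCIDENTS_PER_PR_GROWTH)
print(f"Incidents per author: {growth:+.1%}")
```

Under those assumptions, each author ships about a fifth more PRs but is associated with nearly half again as many incidents, which is why time to "done right" matters more than raw output.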

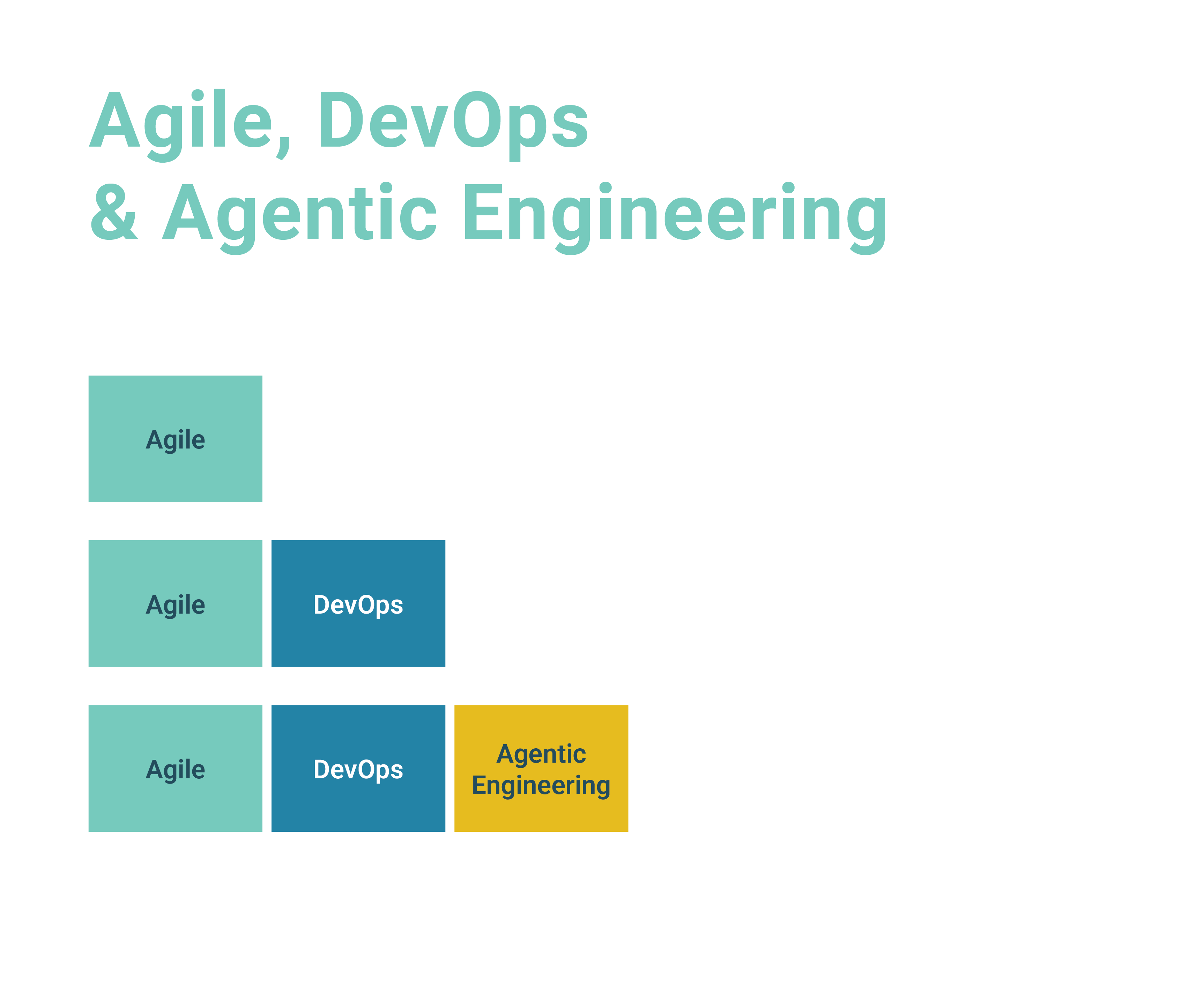

We have been using AI in development for the past two years in various ways across all the roles on our delivery teams. Since the release of these latest models, we have shifted to an Agentic Engineering approach in which AI agents actively perform tasks, make decisions, and coordinate actions rather than just respond to prompts. Our approach to Agentic Engineering uses Agile and DevOps practices to bring visibility and quality to the process while a human in the loop is still crucial. At some point in the future, we believe AI will be able to work without human oversight, and practices designed for human developers may no longer make sense. As long as there is a human in the loop, the Agile and DevOps practices that are good for humans are also good for AI.

Agile provides practices for breaking work down into manageable batches, with a focus on functional, production-ready quality. Even though AI continues to improve, it still works better on small units of work than on large ones. Smaller batches also let it complete the workflow cycle faster, so the human in the loop can review progress from both the technical and the functional perspective.

Good DevOps practices provide the tooling to automate quality controls throughout the process, replacing manual gates at the end, with the goal of being able to deploy 10 times a day. Teams that overcame the organizational constraints behind those manual gates were the ones that achieved the benefits of DevOps. Agentic Engineering practices will create more code more quickly, so these controls become even more critical for maintaining quality. Keeping traditional manual gates at each step will reduce or negate the efficiency gains. To maintain both quality and speed while keeping a human in the loop, the controls for security, code quality, performance, and functional testing need to be automated.
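As a minimal sketch of what an automated gate can look like, the example below runs a set of checks on a batch of work and passes only if every one succeeds. All names are hypothetical, and the checks are stubs standing in for whatever security scanner, linter, performance test, and functional test suite a team actually uses.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# A check returns (passed, detail). Hypothetical interface for illustration.
Check = Callable[[], Tuple[bool, str]]


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def run_quality_gate(checks: Dict[str, Check]) -> List[CheckResult]:
    """Run every check without stopping early, so the report lists all failures."""
    results = []
    for name, check in checks.items():
        try:
            passed, detail = check()
        except Exception as exc:  # a crashing check counts as a failure
            passed, detail = False, f"check raised {exc!r}"
        results.append(CheckResult(name, passed, detail))
    return results


def gate_passes(results: List[CheckResult]) -> bool:
    """An empty gate never passes: no evidence is not the same as success."""
    return bool(results) and all(r.passed for r in results)


# Stub checks covering the four control types named in the text.
example_checks: Dict[str, Check] = {
    "security_scan": lambda: (True, "no findings"),
    "code_quality": lambda: (True, "lint clean"),
    "performance": lambda: (False, "p95 latency above budget"),
    "functional_tests": lambda: (True, "all tests passed"),
}

example_results = run_quality_gate(example_checks)
for result in example_results:
    status = "PASS" if result.passed else "FAIL"
    print(f"{status} {result.name}: {result.detail}")
print("gate passes:", gate_passes(example_results))
```

Because the gate reports every failure in one pass, a human reviewer or a reviewing agent sees the full picture for a batch instead of fixing one issue per cycle.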

Finally, Agentic Engineering adds role-based, skilled agents that perform planning, development, testing, and review through the lifecycle of each batch of work. Separation of duties and role expertise are achieved through separate sub-agents, each with its own skills, commands, and context focused on its activity. Different agents can then review and validate other agents' output.

The metrics for measuring speed and quality are the same ones we have used before. Agile metrics like velocity and burndown give us visibility into, and predictability about, when the project will be completed. Even if the work will be done in weeks instead of months, we still want to know when it will be done. DORA (DevOps Research and Assessment) metrics like deployment frequency and lead time are still important for measuring throughput. DORA has also introduced a new metric called Deployment Rework Rate [5], which measures the share of deployments that were unplanned and happened because of an incident in production. This helps ensure teams are not just shipping junk faster.
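A minimal sketch of that ratio, based only on the description above: the `Deployment` record and its `unplanned_incident_fix` flag are hypothetical, and how a team labels a deployment as rework will depend on its own tooling.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Deployment:
    id: str
    # Hypothetical flag: True when the deployment was unplanned and was
    # made in response to an incident in production.
    unplanned_incident_fix: bool


def deployment_rework_rate(deployments: Sequence[Deployment]) -> float:
    """Share of deployments that were unplanned incident fixes (0.0 to 1.0)."""
    if not deployments:
        return 0.0  # no deployments in the window, so nothing to rework
    rework = sum(1 for d in deployments if d.unplanned_incident_fix)
    return rework / len(deployments)


# Illustrative data only, not real measurements.
week = [
    Deployment("d1", False),
    Deployment("d2", False),
    Deployment("d3", True),
    Deployment("d4", False),
]
print(f"Deployment rework rate: {deployment_rework_rate(week):.0%}")
```

Tracking this alongside deployment frequency shows whether a rise in throughput is being paid for with more incident-driven deployments.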

The one thing we know for sure is that this will continue to change and evolve as new tools and models are released. We will learn new ways to measure the quality and productivity of AI. Until then, don't abandon the past. Combine the past and the future to get the best speed and quality.

Sources: